Artificial intelligence is not just accelerating software development – it is fundamentally changing where engineering effort, risk, and expertise are concentrated. For decades, the primary bottleneck in software development was code production. Today, with tools like GitHub Copilot, ChatGPT, and CodeWhisperer, generating syntactically correct code has become dramatically faster and easier. Stack Overflow’s 2024 survey reports that over 60% of developers already use AI coding tools, and adoption continues to accelerate across organizations of all sizes.

However, increased code generation speed does not automatically translate into better software. In real-world engineering environments, the bottleneck is shifting from writing code to validating, integrating, and maintaining it. As AI reduces the cost of producing code, the relative importance of architectural judgment, system design, and long-term ownership increases.

This shift creates a paradox. AI tools can significantly improve developer productivity in the short term, particularly for repetitive and well-understood tasks. But they can also introduce hidden risks: increased code churn, architectural inconsistency, security exposure, and technical debt that only becomes visible months later. The result is that AI does not eliminate the need for experienced engineers – it makes their judgment more critical than ever.

Based on both independent research and our experience delivering complex embedded, desktop, and cross-platform systems, we see the future of software development as AI-augmented rather than AI-driven. Organizations that treat AI as a replacement for engineering expertise risk accumulating invisible fragility. Those that treat it as an amplifier for strong engineering teams gain a meaningful competitive advantage.

In this article, we examine the real trade-offs between AI-driven and traditional coding, what current research reveals about productivity and quality, and why human system ownership remains the defining factor in building reliable, scalable software.

- 1. The Philosophy of Coding: More Than Just Code

- 2. What AI Code Assistants Actually Do Well

- 3. AI-Driven vs. Traditional Coding: A Structured Comparison

- 4. What the Research Actually Says: The Code Quality Problem

- 5. What We Observed in Real Engineering Projects

- 6. Why AI Cannot Replace Human Engineers: The Core Argument

- 7. The Developex Approach: AI as an Engineering Amplifier, Not a Replacement

- 8. Practical Recommendations for Engineering Leaders

- Final Thoughts: The Real Future of Software Development

1. The Philosophy of Coding: More Than Just Code

Traditional coding is often misunderstood as a mechanical activity: translating specifications into lines of code. In reality, it is closer to engineering design or even applied philosophy. Developers constantly interpret incomplete information, make assumptions, and embed decisions that shape how systems behave for years. Code is not just an implementation artifact; it is a long-term representation of business logic, institutional knowledge, and strategic intent.

Every serious software project involves trade-offs between performance, scalability, security, maintainability, and user experience. These decisions require contextual understanding: knowledge of business goals, regulatory constraints, organizational capabilities, and human behavior. No matter how advanced AI becomes, it does not “understand” purpose in the same way humans do. It statistically predicts what code should look like based on training data, but it does not reason about why a system should exist or how it should evolve.

This distinction is fundamental. AI-generated code may be syntactically valid and even functionally correct, but correctness in software is rarely binary. The real question is whether the system behaves correctly under edge cases, future requirements, security threats, and operational stress. These dimensions emerge from human judgment, not from pattern prediction.

In this sense, coding is less about producing code and more about shaping systems. AI can assist in production, but it cannot replace the responsibility of system ownership.

2. What AI Code Assistants Actually Do Well

AI-driven coding tools excel at pattern recognition. They are extremely good at generating boilerplate, suggesting standard implementations, and accelerating repetitive tasks. For many teams, this leads to tangible productivity gains, especially in early-stage development and routine engineering work.

In practice, AI code assistants bring several real advantages:

- Faster prototyping and proof-of-concept development

- Reduced time spent on repetitive or low-complexity tasks

- Instant access to examples and best practices

- Improved onboarding for junior developers

- Increased developer flow and reduced cognitive load

These benefits are not theoretical. Multiple studies show that developers using AI tools complete tasks faster and report higher perceived productivity. For organizations under pressure to reduce time-to-market, this is a compelling value proposition. AI effectively acts as a “second pair of hands,” enabling engineers to move faster without breaking their flow.

However, speed alone is not quality. And this is where the limitations start to become visible.

3. AI-Driven vs. Traditional Coding: A Structured Comparison

Understanding the trade-offs between these two paradigms requires looking across several dimensions simultaneously – from productivity and cost to security, maintainability, and team dynamics. The table below captures the most relevant dimensions for engineering leaders making strategic decisions.

| Dimension | AI-Driven Coding | Traditional Coding |
|---|---|---|
| Development Speed | High for boilerplate and routine tasks; variable for complex logic | Slower initial output; consistent for complex architectural work |
| Code Quality | Inconsistent; strong for standard patterns, weak for edge cases and business-specific logic | High when led by experienced engineers with clear standards |
| Maintainability | Risk of technical debt accumulation; code may lack contextual coherence | Easier to maintain when written by engineers who understand full system context |
| Security | Documented risk of reproducing known vulnerabilities from training data | Dependent on team practices; human review catches context-specific risks |
| Learning Curve | Low for tool adoption; hidden complexity in validating AI output | High entry barrier; deep expertise compounds over time |
| Cost Profile | Lower short-term output cost; potential long-term costs from rework and debt | Higher upfront; typically lower lifecycle cost for complex systems |
| Domain Adaptation | Limited; AI lacks knowledge of proprietary systems and business logic | Strong; engineers internalize domain context over time |
| Accountability | Ambiguous; ownership of AI-generated code requires active effort | Clear; engineers own and understand what they write |
| Innovation Capacity | Pattern reproduction; struggles with genuinely novel problems | High; human creativity drives architectural breakthroughs |

No single row in that table tells the full story. The real insight is in the interaction between dimensions: AI tools accelerate speed but create accountability gaps; they reduce boilerplate cost but can inflate lifecycle cost through debt; they lower the barrier to writing code while simultaneously raising the stakes for engineering judgment.

4. What the Research Actually Says: The Code Quality Problem

This is where the conversation gets uncomfortable for AI tool vendors – and where engineering leaders need to pay close attention. The most rigorous independent research on AI-generated code quality raises serious concerns that deserve honest examination.

GitClear, an independent code analytics firm, published a widely cited study in 2024 analyzing roughly 153 million changed lines of code authored between January 2020 and December 2023 to measure the impact of AI coding tools on code quality metrics. The findings were striking and, for many, alarming. The study projected that code churn – the percentage of lines written that are deleted or significantly modified within two weeks – would double in 2024 relative to its 2021, pre-AI baseline, a trend that coincides with the mass adoption of AI coding assistants. Churn is a useful proxy for code quality: high churn means code is being written that doesn’t last, code that creates rework burden and contributes to technical debt.

Even more concerning, GitClear’s analysis found that code “moves” – a metric tracking how often code is relocated within a codebase, which correlates with healthy refactoring and thoughtful architectural maintenance – declined significantly over the same period. This suggests that AI-assisted teams are writing more code but doing less of the structural thinking that keeps codebases healthy over time. The research team summarized their concern plainly: AI tools appear to optimize for code generation at the expense of code quality.

“Code written with AI assistance shows higher churn rates, suggesting it’s more likely to be ‘written once and thrown away’ – which is fine for prototyping, but dangerous for production systems.” – GitClear: AI Assistant Code Quality Research, 2024
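To make the churn metric concrete, here is a minimal Python sketch of the definition used in this section: the share of authored lines that are deleted or significantly modified within two weeks. The `LineRecord` structure and the sample data are hypothetical and only illustrate the arithmetic; this is not GitClear’s data model or methodology, and treating a change on day 14 as churn is our assumption.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# "Within two weeks", per the definition above. Counting day 14 itself
# as inside the window is an assumption made for this sketch.
CHURN_WINDOW = timedelta(days=14)


@dataclass
class LineRecord:
    """Hypothetical record for one authored line of code."""
    authored_on: date
    changed_on: Optional[date] = None  # first deletion or significant edit, if any


def churn_rate(lines: list[LineRecord]) -> float:
    """Return the share of lines changed within CHURN_WINDOW of being authored."""
    if not lines:
        return 0.0
    churned = sum(
        1
        for line in lines
        if line.changed_on is not None
        and line.changed_on - line.authored_on <= CHURN_WINDOW
    )
    return churned / len(lines)


# Illustrative data only.
sample = [
    LineRecord(date(2024, 3, 1), date(2024, 3, 5)),   # rewritten after 4 days: churn
    LineRecord(date(2024, 3, 1), date(2024, 6, 1)),   # changed after 3 months: not churn
    LineRecord(date(2024, 3, 1)),                     # never changed
    LineRecord(date(2024, 3, 2), date(2024, 3, 16)),  # changed on day 14: churn
]
print(f"churn rate: {churn_rate(sample):.0%}")
```

In a real codebase the line records would have to be derived from version control history, which is the hard part; the ratio itself is simple.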

Beyond GitClear’s structural findings, security researchers have documented a separate category of risk. A 2023 study from Stanford University found that developers who used AI coding assistants wrote code with significantly more security vulnerabilities than those who did not – and, perhaps more worryingly, were more confident that their code was secure. AI tools trained on public repositories reproduce patterns from that training data, including known vulnerabilities. Without rigorous security review processes, AI-generated code can introduce CVE-class issues that automated linters miss entirely.

There is also the problem of ‘hallucinated’ dependencies: AI tools sometimes suggest package names that don’t exist, which malicious actors can register, or names that closely resemble real packages and may be typo-squatted. This is not a theoretical risk. Security teams at large enterprises have flagged real incidents where AI-suggested dependencies introduced supply chain exposure. The lesson is not to avoid AI tools; it is to never deploy AI-generated code without the same review rigor you would apply to any other code.
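One lightweight guard against the dependency risk described above is to flag any requested package that the team has not already vetted. The sketch below is a simplified illustration, not a complete supply chain control: the allowlist contents, the function names, and the sample package `reqeusts-toolkit` are all hypothetical, and the parser handles only plain `name==version` style lines.

```python
import re
from typing import Optional

# Hypothetical allowlist of packages the team has already reviewed.
APPROVED_PACKAGES = {"requests", "numpy", "pydantic"}


def normalize(name: str) -> str:
    """Normalize a package name as PEP 503 does (case and '-', '_', '.' runs)."""
    return re.sub(r"[-_.]+", "-", name).lower()


def requirement_name(line: str) -> Optional[str]:
    """Extract the package name from a simple requirements-style line."""
    line = line.split("#", 1)[0].strip()  # drop trailing comments
    if not line:
        return None
    match = re.match(r"[A-Za-z0-9][A-Za-z0-9._-]*", line)
    return normalize(match.group(0)) if match else None


def unvetted_dependencies(requirements: str, approved: set[str]) -> list[str]:
    """Return normalized names of requested packages missing from the allowlist."""
    approved_normalized = {normalize(p) for p in approved}
    flagged = []
    for line in requirements.splitlines():
        name = requirement_name(line)
        if name is not None and name not in approved_normalized:
            flagged.append(name)
    return flagged


# Illustrative input: the last entry stands in for a plausible-looking
# name suggested by an assistant that nobody on the team has reviewed.
requirements_txt = """\
requests==2.31.0
numpy>=1.26
reqeusts-toolkit==0.1   # suggested by an AI assistant
"""
print(unvetted_dependencies(requirements_txt, APPROVED_PACKAGES))
```

A check like this does not prove a package is safe; it only forces a human decision before a new name enters the build.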

5. What We Observed in Real Engineering Projects

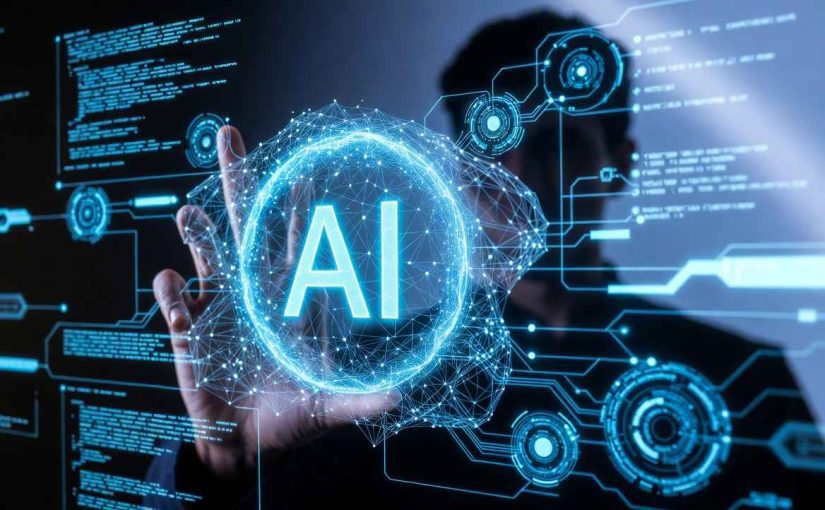

While industry research provides valuable macro-level insights, the practical impact of AI coding tools becomes clearer when observed inside real engineering workflows. Across our embedded, desktop, and cross-platform development projects, we have seen consistent patterns emerge – both positive and cautionary.

AI significantly accelerates initial implementation – but not final delivery.

In many cases, AI tools reduce the time required to produce initial code drafts, particularly for standard components, interface layers, and test scaffolding. This accelerates early development phases and helps teams move faster from concept to working prototype. However, the time saved during initial generation is often partially offset by the need for careful validation, integration, and refactoring to ensure consistency with system architecture and performance requirements.

AI shifts effort from code creation to code validation.

Traditionally, senior engineers spent most of their time writing and refining complex logic. With AI assistance, more time is now spent reviewing generated code, verifying assumptions, and ensuring architectural alignment. This changes the nature of engineering work: productivity gains depend not only on generation speed, but on the team’s ability to efficiently evaluate and integrate AI-generated output.

Architectural coherence becomes a critical risk factor.

AI tools generate code at the level of individual functions or modules, without full awareness of system-wide design decisions. In larger codebases, this can lead to subtle inconsistencies in patterns, abstractions, or error-handling approaches. Left unaddressed, these inconsistencies can accumulate into technical debt that reduces maintainability over time. Strong architectural oversight remains essential to preserve long-term system integrity.

Senior engineering expertise becomes more – not less – important.

One of the most counterintuitive effects of AI adoption is that it increases the leverage of experienced engineers. As code generation becomes easier, the limiting factor shifts to system design, decision-making, and long-term ownership. Senior engineers play a critical role in guiding architecture, validating AI output, and ensuring that development speed does not compromise reliability.

AI delivers the greatest value in well-defined, bounded problem areas.

The most reliable productivity gains occur when AI is applied to tasks with clear requirements and established patterns: test generation, documentation, interface definitions, and standard integrations. In contrast, tasks involving novel architectures, performance-critical systems, hardware interaction, or domain-specific logic still require deep human expertise and careful engineering judgment.

These observations reinforce a key conclusion: AI is most effective when used as a tool within disciplined engineering processes, not as a replacement for them. Organizations that combine AI acceleration with strong architectural ownership and engineering rigor achieve meaningful productivity gains without compromising long-term system quality.

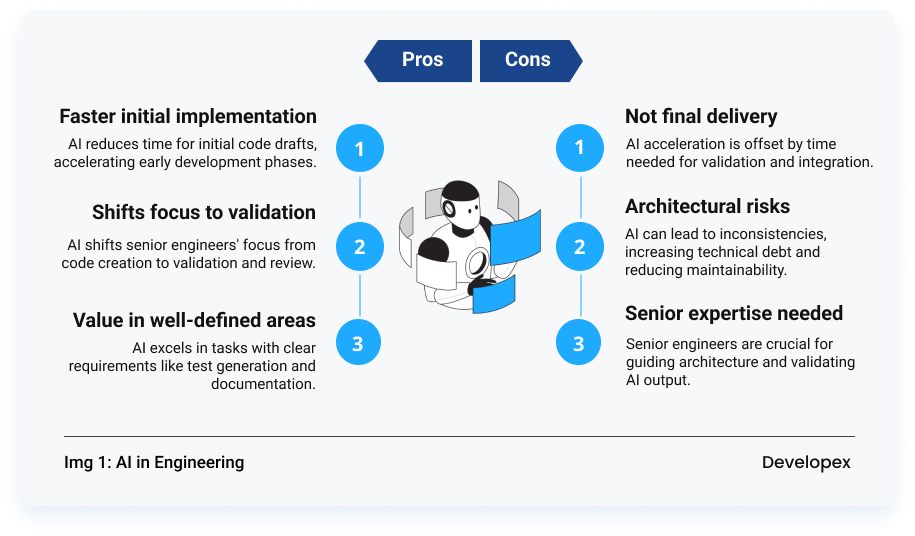

6. Why AI Cannot Replace Human Engineers: The Core Argument

The case for human engineers is not nostalgic or defensive. It is grounded in a clear-eyed understanding of what software development actually is when done well. Writing code is the easy part. The hard part is everything else.

6.1 Systems Thinking at Scale

Software systems are not collections of functions. They are architectures – interconnected decisions about data flow, failure boundaries, scalability constraints, and evolution paths. AI tools operate at the level of individual code blocks. They have no model of your system’s history, your team’s constraints, or your business’s five-year roadmap. Architectural decisions require a human being who can hold all of that context simultaneously and reason about second- and third-order consequences.

6.2 Business Domain Comprehension

The most valuable software solves specific problems in specific domains. A fintech platform handles regulatory nuance differently from an e-commerce engine. A healthcare application operates under compliance requirements that shape every data model and API contract. AI tools have no access to your proprietary business logic, your contractual obligations, or the implicit knowledge that your senior engineers have accumulated over years of working with your customers. Domain expertise is not replicable from public training data.

6.3 Accountability and Ownership

When a production system fails at 3 AM, someone needs to understand it deeply enough to diagnose and fix it under pressure. AI tools do not take on-call rotations. They do not hold post-mortems. They cannot be held accountable for the code they produce. The engineer who owns a system – who wrote it, reviewed it, refactored it, and knows its failure modes – is irreplaceable in that moment. Organizations that allow AI tools to erode that sense of deep ownership are creating operational risk that may not become visible until a critical incident.

6.4 Ethical and Strategic Judgment

Software increasingly makes decisions that affect people’s lives – credit approvals, medical diagnoses, hiring recommendations, content moderation. The engineers who build these systems bear responsibility for the choices embedded in them: what data to use, what to optimize for, what edge cases to handle and how. AI tools have no ethical framework. They reproduce patterns from training data without evaluating whether those patterns are fair, appropriate, or aligned with your organization’s values. Human judgment is not optional in this domain; it is the point.

6.5 Creative Problem-Solving and Innovation

The most consequential engineering work – the architectural breakthrough, the novel algorithm, the product insight that creates a new category – does not emerge from pattern completion. It emerges from human curiosity, cross-domain synthesis, and the willingness to challenge assumptions. AI tools are extraordinarily good at recombining what already exists. They are not good at inventing what doesn’t. If your competitive advantage depends on genuine technical innovation, you need engineers who can think beyond the training data.

7. The Developex Approach: AI as an Engineering Amplifier, Not a Replacement

At Developex, we integrate AI coding tools into our engineering workflows across embedded, desktop, and cross-platform projects – but with clear boundaries and engineering accountability. Our experience shows that AI delivers the greatest value when applied to well-defined, repetitive tasks such as boilerplate generation, test scaffolding, documentation, and implementation of standard patterns. In these areas, AI can accelerate development without compromising system integrity.

At the same time, we have observed that AI-generated code often lacks awareness of system-level constraints, hardware-specific behavior, performance optimization requirements, and domain-specific architectural decisions. For example, in embedded and performance-sensitive desktop applications, AI-generated implementations may appear correct but introduce inefficient memory usage patterns, unnecessary abstractions, or blocking operations that degrade performance under real-world workloads. These issues are not visible at the code syntax level – they emerge only when engineers evaluate the system under realistic operating conditions. As a result, AI-generated output is always treated as a draft that requires validation, review, and refinement by experienced engineers who understand the full system context.

In practice, AI shifts engineering effort rather than eliminating it. It reduces the time required to produce initial implementations, but increases the importance of architectural oversight, code review rigor, and long-term maintainability planning. Our senior engineers focus not on writing more code faster, but on ensuring that systems remain coherent, scalable, and resilient as they evolve.

This approach allows us to achieve both speed and reliability. AI accelerates execution, while human engineers ensure correctness, sustainability, and alignment with long-term product goals. The result is not just faster delivery, but stronger software systems that remain maintainable and adaptable over time.

We view AI not as a substitute for engineering expertise, but as a force multiplier for disciplined engineering teams. The competitive advantage does not come from using AI alone – it comes from integrating AI into mature development processes guided by deep technical experience and system ownership.

8. Practical Recommendations for Engineering Leaders

If you’re a CTO, VP of Engineering, or senior technical leader trying to make smart decisions about AI adoption in your organization, here is a framework grounded in both the research and practical delivery experience.

- Establish AI code review standards before expanding AI tool access. Teams that adopt AI tools without updated review processes are the ones most likely to experience the code quality degradation GitClear documented. Define what “reviewed” means for AI-generated code before it reaches production.

- Measure lagging indicators, not just leading ones. Velocity and output volume are easy to measure. Technical debt accumulation, defect rates, MTTR on production incidents, and onboarding time for new engineers are harder to measure but more meaningful. Track both.

- Preserve and invest in senior engineering judgment. The highest-value thing a senior engineer does is not write code faster – it’s make better decisions. AI tools that allow organizations to thin out senior engineering capacity are creating hidden systemic risk.

- Apply AI tools selectively by task type. Boilerplate generation, test scaffolding, documentation, and code explanation are high-value, lower-risk use cases. Novel architecture, security-sensitive logic, and domain-specific business rules require human-led development.

- Audit AI tool usage for security exposure. Implement tooling to detect AI-suggested dependencies, review AI-generated code for known vulnerability patterns, and include AI tool usage in your threat modeling process.

- Treat AI adoption as a cultural change, not a tooling change. The organizations that benefit most from AI coding tools are those where engineers understand the tools’ limitations and take active ownership of the code they produce – regardless of how it was generated.
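As one example of the lagging indicators recommended above, MTTR can be tracked with very little tooling once incident timestamps are recorded consistently. The sketch below assumes MTTR means mean time to resolve and uses a hypothetical `Incident` record with illustrative data; real incident trackers will expose different fields.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Incident:
    """Hypothetical production incident record; field names are illustrative."""
    detected_at: datetime
    resolved_at: datetime


def mean_time_to_resolve(incidents: list[Incident]) -> timedelta:
    """Average the detection-to-resolution duration across incidents."""
    if not incidents:
        raise ValueError("no incidents to average")
    total = sum(
        (incident.resolved_at - incident.detected_at for incident in incidents),
        timedelta(),
    )
    return total / len(incidents)


# Illustrative data only: one 45-minute and one 135-minute incident.
incidents = [
    Incident(datetime(2024, 5, 2, 3, 0), datetime(2024, 5, 2, 3, 45)),
    Incident(datetime(2024, 5, 9, 14, 0), datetime(2024, 5, 9, 16, 15)),
]
print(mean_time_to_resolve(incidents))
```

Comparing this figure before and after a change in AI tool usage is more informative than any single reading, since incident volume and severity vary from month to month.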

Final Thoughts: The Real Future of Software Development

The future of software is not AI-driven or human-driven. It is AI-assisted human engineering.

AI will continue to automate low-level tasks, reduce friction, and make development more accessible. But as systems become more complex and interconnected, the need for human judgment will increase, not decrease. Distributed architectures, AI-powered products, cybersecurity risks, and regulatory constraints all demand deeper engineering expertise.

In this future, the role of developers evolves rather than disappears. Engineers become system designers, technical strategists, and problem solvers. AI becomes infrastructure: always present, always helpful, but never autonomous.

For business leaders, the question is not whether to adopt AI in software development. The question is how to adopt it responsibly, without sacrificing quality, security, and long-term resilience.

AI can write code. But only humans can build software that lasts.

Ready to combine machine-level speed with human-level systems thinking? Contact us today to discuss your project and see how our AI-augmented engineering teams can help you deliver higher quality, faster.