One of the most common ways to build an application that reacts to natural language is to use Alexa, Amazon’s NLU service. Alexa is a well-known and widely used platform that analyzes natural language, recognizes commands, and passes them to an application. You can ask Alexa almost anything and it will answer immediately (well, at least when you are not digging for CIA secrets).

Alexa can also play music (Amazon Music, iHeart, TuneIn, Spotify, Pandora), audiobooks from Amazon Audible, and even the regular text books you own! In addition, Alexa lets you manage alarms, view shopping and to-do lists, and much more. You can even place new Amazon orders with it!

There is a wide range of areas where voice recognition can be used. You could use Alexa as a submodule of your own app for iOS, macOS, or tvOS and get all the benefits Alexa has brought to this world. But that’s not all. With Alexa it’s possible to make your smart devices even smarter! Wouldn’t it be awesome to give voice commands to your smart speaker, TV, home theater, and smart home devices such as lights, refrigerators, or any number of other IoT devices? With Alexa it’s possible to create custom voice skills for any feature of any device.

What is the Problem?

Creating an Alexa solution from scratch requires an enormous amount of time. Amazon provides a complex API with dozens of calls for working with Alexa (and it uses HTTP/2 in a very specific way). Not only development but also testing and certification by Amazon can take weeks, if not months. Sad!©

Unifying Integration

DevelopEx uses Alexa in various projects for both iOS and Android devices. Naturally, the idea came up to unify our integration process. That is why we developed and tested our own custom Alexa Voice Service (AVS) library for iOS. With our library, integration is easy and painless: we drag and drop the library into a project, then add a couple of lines of code to trigger a dialog. It takes 10 minutes and gives us confidence that everything is working fine.

Interior Arrangement

The library is written in Objective-C and consists of three modules:

- Dialogs

- Alerts

- Media content

The library handles numerous directives and triggers a large number of events. Of course, we took Amazon’s certification requirements into account. For example, the library must provide continuous media playback for a minimum of eight hours. The DevelopEx AVS library can keep playback alive for three days (maybe even more, we just got tired of listening to all this music) while staying as consistent and stable as a rock!

Another powerful feature is the ability to work in the background. Our AVS library keeps the app alive for a long time even when it is idle (provided an accessory device is connected).

The AVS library currently exposes the following interfaces:

- Start recording

- Stop recording (and send the recorded audio)

- Cancel recording

- Log in to an Amazon account

- Alerts and timers

- Logs

- Export written commands and answers

- Block Alexa usage

For now, this is enough to cover everything needed for communication between various applications and Amazon services.
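To make the interface list above more concrete, here is a minimal Swift sketch of what a recording facade of this kind might look like. Every name in it (`VoiceAssistantClient`, `StubVoiceAssistantClient`, `append(_:)`, `log`) is hypothetical and invented for illustration; it is not the DevelopEx library's actual API, which is written in Objective-C and is not shown in this article. The stub only covers start, stop, cancel, blocking, and logging, and keeps audio in memory instead of touching the microphone or the network.

```swift
import Foundation

/// Hypothetical facade mirroring part of the interface list above.
/// Not the real library API; names are assumptions for illustration.
protocol VoiceAssistantClient: AnyObject {
    var isBlocked: Bool { get set }     // "Block Alexa usage"
    var isRecording: Bool { get }
    func startRecording() -> Bool       // false if blocked or already recording
    func stopRecording() -> Data?       // audio that would be sent, or nil
    func cancelRecording()              // discard audio without sending
}

/// In-memory stand-in, handy for wiring up UI before a real client exists.
final class StubVoiceAssistantClient: VoiceAssistantClient {
    var isBlocked = false
    private(set) var isRecording = false
    private(set) var log: [String] = []  // "Logs"
    private var buffer = Data()

    func startRecording() -> Bool {
        guard !isBlocked, !isRecording else { return false }
        isRecording = true
        buffer.removeAll()
        log.append("start")
        return true
    }

    /// Test hook: pretend the audio input delivered some samples.
    func append(_ samples: Data) {
        guard isRecording else { return }
        buffer.append(samples)
    }

    func stopRecording() -> Data? {
        guard isRecording else { return nil }
        isRecording = false
        log.append("stop (\(buffer.count) bytes)")
        return buffer
    }

    func cancelRecording() {
        guard isRecording else { return }
        isRecording = false
        buffer.removeAll()
        log.append("cancel")
    }
}
```

Returning the captured audio from `stopRecording()` is a design choice of this sketch: it keeps the "stop and send" step testable without a network connection.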

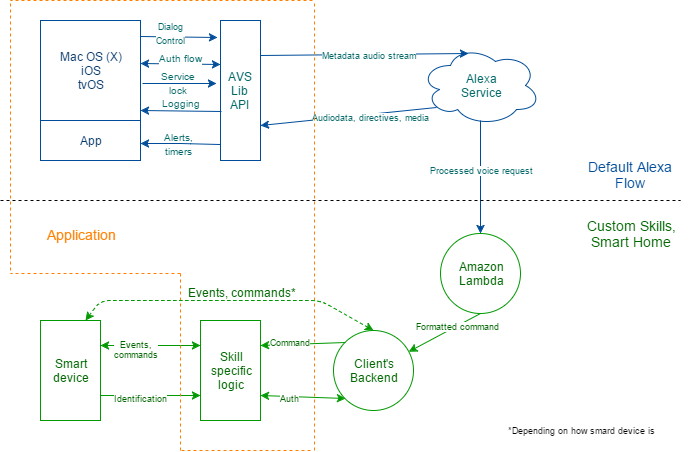

Interactions and data flow between a Device, an application, the AVS Library, Amazon’s services and iOS.

How Does the AVS Library Work?

- The application waits for a dialog trigger event.

- When the event arrives, the application tells the AVS library to start voice recording.

- The library:

  - syncs its state with the Alexa service;

  - records sound from the system audio input;

  - streams the recorded data to Alexa.

- Alexa processes the query and generates a corresponding directive (answer). The directive can contain a voice answer, a URL pointing to audio content (music, an audiobook, news, etc.), or only JSON that defines what to do (pause or mute, for example) along with the necessary metadata.

- The AVS library handles the directive.
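The last step, handling a directive, can be sketched as a small dispatcher that inspects the directive's JSON and decides what the app should do. This is an illustrative sketch, not the library's implementation: the `DirectiveAction` type and the `action(forDirectiveJSON:)` function are hypothetical, and the JSON layout (`directive.header.namespace`, `directive.header.name`, `payload.audioItem.stream.url`, `payload.mute`) is an assumption based on the general shape of AVS directives and should be checked against Amazon's documentation.

```swift
import Foundation

/// What the app should do in response to a directive (hypothetical type).
enum DirectiveAction: Equatable {
    case speak                 // play a synthesized voice answer
    case playAudio(URL)        // stream music, an audiobook, news, ...
    case setMute(Bool)         // JSON-only command with no audio attached
    case unsupported(String)   // well-formed, but not handled by this sketch
}

/// Maps one directive JSON document to an action.
/// Returns nil when the JSON is malformed or a required field is missing.
func action(forDirectiveJSON data: Data) -> DirectiveAction? {
    guard
        let root = try? JSONSerialization.jsonObject(with: data) as? [String: Any],
        let directive = root["directive"] as? [String: Any],
        let header = directive["header"] as? [String: Any],
        let namespace = header["namespace"] as? String,
        let name = header["name"] as? String
    else { return nil }
    let payload = directive["payload"] as? [String: Any] ?? [:]

    switch (namespace, name) {
    case ("SpeechSynthesizer", "Speak"):
        return .speak
    case ("AudioPlayer", "Play"):
        // Assumed location of the stream URL inside the payload.
        let item = payload["audioItem"] as? [String: Any]
        let stream = item?["stream"] as? [String: Any]
        guard let text = stream?["url"] as? String,
              let url = URL(string: text) else { return nil }
        return .playAudio(url)
    case ("Speaker", "SetMute"):
        guard let mute = payload["mute"] as? Bool else { return nil }
        return .setMute(mute)
    default:
        return .unsupported("\(namespace).\(name)")
    }
}
```

Keeping parsing separate from playback means each of the three directive kinds described above (voice answer, audio URL, JSON-only command) can be unit-tested without any audio hardware.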

The AVS library can be easily integrated into both new projects and existing ones that require Alexa functionality.

Vasyl Krevega,

iOS developer

Images used in the article: etcentric.org.
